I have been musing a bit about combinatory and probabilistic

logic, and what they have to teach us philosophically. It’s perhaps an obvious sort of point, but

to me it’s interesting to reflect on these basic foundations and try to

understand what are the core, minimum requirements for being able to perform

computations and think about the world.

The conclusion of my musing here will be list of

commonsensical semantic primitives, so that, roughly speaking: Any mind that

has mastered these, should be able to do universal computing and universal

probabilistic logic … limited only by its available resources.

The Crux of

Combinatory Logic

Let us start with computation; and what is in a sense the

simplest formalism for universal computation, combinatory logic.

One thing combinatory logic tells us is that to do any kind

of computation, all we need is to be able to do the following things:

- · Sequential ordering: arranging two or more things in a list with a specific order

- · Grouping: drawing a boundary around a subsequence of two or more things, to distinguish the subsequence from other things before or after it

- · Insertion: draw an arrow connecting one entity or sequence, with a position in another sequence

- · Removal: note that one entity or sequence, consists of a certain other sequence with its {beginning, middle or end} removed. We could decompose this into the primitive concepts of beginning middle and end; and the primitive concept of removal.

- · Action: Actually carry out an insertion (placing one entity in a certain position in another sequence) or removal (create a copy of a sequence with its beginning, middle or end removed)

An elegant way to represent insertion and removal is to

think about there as being four sorts of arrows: simple arrows,

remove-beginning arrows, remove-middle arrows and remove-end arrows. Enacting a remove-end arrow pointing from a

sequence to a position in another sequence, results in said position receiving

a version of the first sequence with its end removed.

This set of basic operations is enough to do general-purpose

computation. To see, this, note that

the S and K combinators, defined as

K x y = x

S x y z = (x y) (x z)

are enough to do general-purpose computation. For,

·

K is just a remove-end arrow

·

S is a sequencing of a grouping of a remove-end

arrow, and a grouping of a remove-middle arrow

(Note that, according to the sufficiency of S and K,

remove-beginning is not strictly necessary; we can make do with just

remove-middle and remove-end, and derive remove-beginning therefrom.)

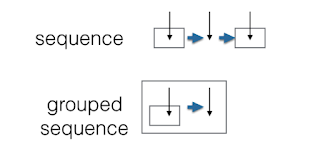

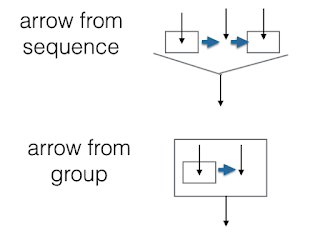

We can depict this graphically by adopting some simple

conventions:

- · Entities written on a horizontal row, connected by thick arrows pointing from left to right, are considered as a sequence to be read from left to right

- · Arrows denoting insertion point down

- · Removals are represented as arrows labeled by –e (remove end), -m (remove middle) or –b (remove beginning)

- · A star indicates the result of doing an action (where an action is an arrow)

- · Grouping of a subsequence is indicated by drawing a box around the subsequence

- · An arrow pointing into a box, means that upon enaction, the result of the action denoted by the arrow is placed into the box

- · An arrow pointing from a bracket around a subsequence, takes the bracketed subsequence as input when enacted

- · An arrow pointing from a grouping (box) around a subsequence, takes the box and its contents as input when enacted

The following diagrams illustrate these conventions:

In this formalism, an arbitrary computation can be

represented as a series of rows, where each row consists of a sequence of boxes

(some containing other sequences of boxes) or spaces; with each lowest-level

box and each space containing the pointy end of an arrow, whose root is some

box or bracketed subsequence on the row above.

We can support concurrency in this formalism by interpreting

two sequences A and B so that neither A nor B is below each other, as

concurrently enactable. We say that X is

immediately below Y if there is an arrow pointing from somewhere in X to

somewhere in Y. We say that X is below Y

if you can reach Y from X by following a series of immediately-below

relationships.

Conceptually, and to be a bit repetitive, what this means

is: to do ANY computation whatsoever, all you need to be able to do is…

- · Arrange things in a linear sequence

- · Put a boundary around a subsequence, to mark it off from the rest of the sequence

- · Indicate to yourself that one sequence (with a boundary or not) should be inserted in a certain place in another sequence

- · Indicate to yourself that one sequence is obtained by removing the beginning, middle or end of some sequence

- · Enact some insertion or removal that you have conceptualized

That’s it. In a very basic sense, that’s what computing

is.

Boolean and

Probabilistic Logic

Now, none of the above tells us what things should be

arranged in sequence, or grouped in boxes, in the first place. Combinatory

logic as formulated above can only do purely mathematical computing. To do computing relative to some body or

other practical system, we need to add at very least one more primitive:

- · Enact some primitive action, based on some sequence of (primitive or composite) perceptions

To handle perceptions and primitive actions elegantly, however, it seems this is not

enough. It seems very useful to add a

few additional components to our basic model of computation, to wit:

- · Un-ordered grouping (sets)

- · Join (on sets)

- · Direct product (on sets)

- · Set difference

- · Comparison (a partial order operation)

- · Valuation: mapping sets of primitive perceptions into numbers

As Knuth and Skilling have shown if we add these operations,

then we get not only Boolean logic, but also, fairly directly, probability

theory. If we let the valuation map into

pairs of real numbers instead of single real numbers, then we get quantum

probability theory.

On the other hand, what Schonfinkel showed when originally

formulating combinatory logic, was that he could emulate quantifier logic using

combinatory logic simply by adding one more combinator, the U combinator. What the U combinator does is says “UAB means

not both A and B.” But this comes

immediately as a side-effect of the set operations introduced just above. So if we put the basic combinator operations

together with the basic lattice operations, we get quantifier logic.

So we then get general-purpose probabilistic-logical

computation.

Graphically, to represent these additional algebraic

operators, we would add the potential for unordered grouping. For instance, in this diagram

the outputs of the first two arrows can be in either order

inside their grouping; and this grouping can be in either order as compared to

the third arrow. The left-to-right

ordering on the page has no semantics here.

One would then add join (+) and direct product (x) operators

between unordered groups. Unordered

groups can contain sequences;a nd sequences can contain unordered groups; or

operator expressions built up from unordered groups using + or x. A box around an unordered group may be used

to allow + or x to act on that group as a whole.

(To emphasize, these additions are not strictly needed,

because pure combinatory logic can be used to emulate these lattice

operations. I just think the latter is

counterintuitive and messy, and it’s better to add the lattice operations as

primitives. This is certainly

debatable.)

So, to be a bit repetitive again -- philosophically, what

this suggests is that to do computing nicely about observations in a world, you

also want to have a few other processes at your disposal besides those strictly

required for computation:

- · The ability to group things into unordered sets

- · The ability to join two sets together

- · The ability to combine two sets (by combining each element of one, with each element of the other)

- · The ability to compare two sets, to see if one is part of the other

- · The ability to measure how big a set is – i.e. to map sets to numbers representing “size”, in a way that works nicely with the above set operations

- · The ability to compare two size-measurements, so as to say if one set is bigger than another

These basic operations let you do probabilistic logic; and

put together with combinators, they let you do quantifier logic … and this

logic can then interoperate complexly with computation.

Musing a Bit

One nice thing is that all these operations are pretty

simple and natural conceptually. They are not weird, obscure mathematical

operations. They are all quite basic,

intuitive operations.

Looking at these basic operations, it’s not hard to see how

the ability for general-purpose computation and logical reasoning would emerge

from simpler organisms lacking this

ability. Each of the basic operations

needed to support computation or reasoning seems likely to be useful in

isolation, in various situations. The

ability to identify middles and ends, or the ability to insert sequences into

other sequences, or the ability to mentally join together two sets of

observations, etc. – each of these things is going to be useful in practice for

many organisms that cannot do general logic or computation. But then once these basic capabilities have

evolved, due to their partly-separate, partly synergetic-in-various-groupings,

utility … then they can come together to do general-purpose computation and

logic … and then the utility of this general-purpose logic will reinforce all

these capabilities and cause them to pass on through the generations.

Thinking about general intelligence, it seems that if we

wanted to take a reasonably good stab at making an unbiased sort of general

intelligence, we would make a system that tried to learn concise programs

computing patterns in its observations, using a programming language comprising

the simple operations enumerated above.

Such an AGI would aim to learn patterns in the world that were as simple

as possible, when expressed in terms: of basic lattice operations,

corresponding numerical size comparisons; and basic sequence groupings,

insertions and beginning/middle/end removals.

In terms of teaching baby AGIs, it would perhaps be wise to

include in the curriculum lessons focused on each of the elementary operations

mentioned above. Via combining together

practical facility with these elementary operations, quite general practical

facility becomes possible.

Semantic Primitives

Finally, it is a little bit interesting to take these basic

mathematical operations and reframe them less formally as semantic primitives.

The verbal primitives we arrive at, from the above math

operations, look like:

- · Before

- · After

- · Together with

- · Put into

- · Take out of

- · Beginning

- · Middle

- · End

- · Do

- · Or

- · Combine with

- · More than

- · Graded indication of how much more than

Every human language, so far as I know, contains good methods

of referring to all of the above except for perhaps the latter. The latter I suspect is handled gesturally

and/or in terms of phonological variation, in languages which have limited

verbal mechanisms for expressing magnitudes.

At a basic level, it is mastery of these semantic primitives

that enables universal computation and universal uncertain reasoning.

An Alternative

Language for Automated Programming?

These considerations also suggest a concise elementary

programming language, a bit different from existing ones. What we would have are:

- · Basic types for: primitive action, primitive perception, list, set, group

- · The notion of an arrow from a set, group, list or sub-list to a specific location in another list

- · The notion of removing the beginning, middle or end of a list (or, for good measure, perhaps removing any specified sub-list between positions m and n in a list).

- · The notion of enacting a set or list (thus indirectly enacting some primitive actions, after following some arrows)

- · Join and direct-product as set operations

- " "Greater than" and "less than", for sets based on inclusion, and for numbers produced as probabilities

- Addition and multiplication of probabilities; and evaluation of probabilities via normalizing set sizes

Such a language would not be very suitable for human

programming, but could be an interesting alternative for automated programming

such as evolutionary learning or probabilistic program inference. It would seem amenable to a highly efficient

implementation, leveraging nice data structures for holding and manipulating sets

and lists.

3 comments:

Something similar to these semantic primitives is now being displayed during the operation of ghost175.pl in Perl and Mind.Forth in Win32Forth.

Tiny acorns

Thanks for sharing this information if you want to read about mba deadlinesthen do please visit our blog.

Post a Comment